Reddit Prompt Engineering: What People Really Think In 2026

At AI Flow Chat

If you've spent any time searching for honest takes on prompt engineering, you've probably ended up on Reddit. No surprise there. Reddit prompt engineering threads are where practitioners, skeptics, and hobbyists hash out what actually works, without the polished veneer of LinkedIn posts or paid courses trying to sell you something.

But scrolling through dozens of subreddits to piece together a clear picture is a time sink. Opinions contradict each other. Threads get buried. What was relevant six months ago might already be outdated, especially as AI models keep shifting what "good prompting" even means. Some Redditors swear prompt engineering is a dead-end skill. Others argue it's more critical than ever. The truth, as usual, sits somewhere in the middle, and depends heavily on how you're actually using prompts in your work.

That's something we think about constantly at AI Flow Chat, where our entire platform is built around turning prompts into repeatable, visual workflows. We watch these Reddit debates closely because they reflect real friction points our users face: how to get consistent outputs, how to reference multiple sources without losing context, and how to move beyond basic chat interfaces. So we pulled together the most useful insights, recurring advice, and genuine criticisms from Reddit's prompt engineering communities to give you a single, honest breakdown of where things stand right now.

Here's what people actually think, and what's worth paying attention to.

What Reddit means by prompt engineering in 2026

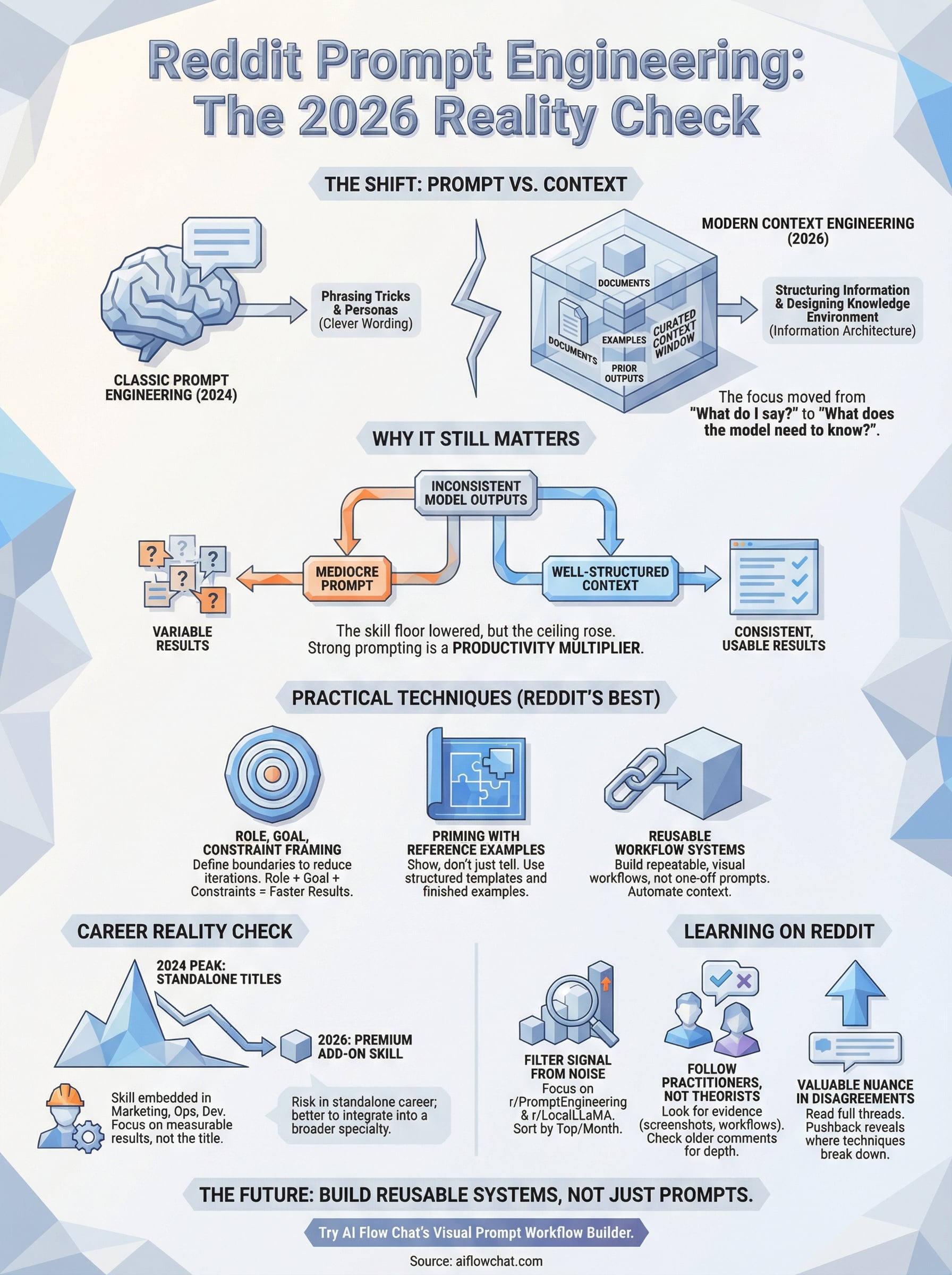

The definition has shifted. Two years ago, prompt engineering meant crafting clever instructions to coax a model into producing a usable output. Today, Reddit's technical communities draw a harder line between "prompt engineering" and what they're increasingly calling context engineering, and the distinction matters if you want to use AI seriously in your work.

The old definition vs. what Reddit argues now

The classic version of prompt engineering focused on phrasing tricks: adding "think step by step," specifying a persona, or using few-shot examples. Those techniques still work, but Reddit's prompt engineering discussions in 2026 treat them as table stakes rather than skills. The consensus in communities like r/PromptEngineering and r/LocalLLaMA is that the real craft now lies in structuring the information you feed the model, not just in how you phrase the ask. Users who chase clever wording without managing context report increasingly inconsistent results.

The biggest shift in these communities is the move from "what do I say to the model?" to "what does the model need to know before I say anything?"

Where context engineering enters the conversation

Context engineering is the practice of deliberately curating what goes into a model's context window before you prompt it. This includes deciding which documents, examples, or prior outputs to include, in what order, and at what level of detail. Reddit threads from early 2026 show practitioners treating this like information architecture. You are not just writing a prompt; you are designing the knowledge environment the model works inside. For teams running repeatable workflows, this means building systems where the right context arrives automatically rather than relying on a human to reconstruct it every session. That shift from one-off prompts to structured, reusable input frameworks is where most serious practitioners now spend their energy.
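To make that concrete, here is a minimal Python sketch of automated context assembly, assuming you already have candidate snippets and a rough size budget. The `ContextItem` class, the `build_context` function, and the priority scheme are hypothetical illustrations rather than part of any particular tool, and character counts stand in for real token counts.

```python
from dataclasses import dataclass


@dataclass
class ContextItem:
    """One candidate piece of context: a document, example, or prior output."""
    label: str
    text: str
    priority: int  # lower number = more important, placed earlier in the context


def build_context(items: list[ContextItem], max_chars: int) -> str:
    """Select and order context items by priority within a size budget.

    Character length is a stand-in for token count (an assumption; a real
    pipeline would measure with the target model's tokenizer).
    """
    sep = "\n"
    sections: list[str] = []
    used = 0
    for item in sorted(items, key=lambda i: i.priority):
        section = f"### {item.label}\n{item.text.strip()}\n"
        # Count the separator too, so the joined result never exceeds max_chars.
        cost = len(section) + (len(sep) if sections else 0)
        if used + cost > max_chars:
            continue  # skip oversized items; smaller, lower-priority ones may still fit
        sections.append(section)
        used += cost
    return sep.join(sections)
```

Because the selection and ordering live in code, every session receives the same context in the same order, instead of depending on someone to paste it in by hand.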

Why Reddit still cares about prompt engineering

The short answer is that model outputs are still inconsistent, and Redditors who rely on AI for real work feel that inconsistency daily. Debates about whether prompt engineering is "dead" resurface regularly, but they consistently get shut down by practitioners who share concrete before-and-after examples. The community stays engaged because the gap between a mediocre prompt and a well-structured one still produces dramatically different results, regardless of how capable the underlying model gets.

The more powerful the model, the more a bad prompt wastes its potential.

The "is it a real skill" debate

Reddit keeps returning to this question because the answer is genuinely complicated. Some users point out that frontier models like GPT-4o and Claude 3.7 Sonnet are more forgiving of sloppy prompts than earlier versions were. Others counter that tolerating a sloppy prompt is not the same as producing an optimal output.

In Reddit's prompt engineering communities, the working consensus is that strong prompting remains a productivity multiplier even though it is no longer a hard requirement for getting something usable out of the model. The skill floor has dropped, but the ceiling has risen just as fast, which is exactly why practitioners keep showing up to trade notes.

Practical techniques Reddit says actually work

Skip the philosophy for a moment. Across Reddit's prompt engineering threads, a handful of techniques show up repeatedly because practitioners report that they consistently produce better outputs. These are not hacks; they are structural habits that serious users have field-tested and shared with concrete results attached.

Role, goal, and constraint framing

The most cited pattern is a three-part opener: tell the model what role to occupy, state the specific goal the output must achieve, then add constraints that rule out outputs you do not want. Reddit users report that this significantly reduces back-and-forth because the model has a bounded space to work within from the start. Fewer revision cycles mean faster results, which matters when you are running workflows at volume.
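A minimal sketch of that three-part opener as a reusable Python function. The `frame_prompt` name and the "Role / Goal / Constraints / Task" labels are illustrative assumptions; the Reddit threads describe the pattern, not a fixed syntax.

```python
def frame_prompt(role: str, goal: str, constraints: list[str], task: str) -> str:
    """Compose a prompt that states role, goal, and constraints before the task.

    The section labels are an illustrative convention, not a required format.
    """
    # One bullet per constraint; fall back to an explicit "none" so the section is never empty.
    constraint_lines = "\n".join(f"- {c}" for c in constraints) or "- none"
    return (
        f"Role: You are {role}.\n"
        f"Goal: {goal}\n"
        f"Constraints:\n{constraint_lines}\n\n"
        f"Task: {task}"
    )
```

Keeping the framing in one function means every request in a workflow opens with the same bounded space, and only the task line changes from run to run.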

A bounded model is a faster model: giving it a role and a goal can sharply cut the number of iterations you need.

Feeding reference examples before asking

Front-loading examples before the actual request is another technique that keeps surfacing. Rather than describing what a good output looks like, you show it. Reddit practitioners call this "priming the context," and it connects directly to the context engineering shift discussed earlier. Useful formats for this include:

- A finished piece of writing you want to match in tone

- A competitor post you want to reframe in your own voice

- A structured output template the model should populate
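Here is one way to front-load those three formats, sketched in Python. It assumes the common `{"role": ..., "content": ...}` chat-message shape that many model APIs accept; the `prime_with_examples` function and the tag names are hypothetical, and wrapping each example in XML-style tags is one delimiting choice among several, not something the Reddit threads prescribe.

```python
def prime_with_examples(
    examples: list[tuple[str, str]], request: str
) -> list[dict[str, str]]:
    """Build a single chat message that shows reference material before the ask.

    Each example is a (tag, text) pair wrapped in XML-style tags so the model
    can tell the references apart from the request that follows them.
    """
    blocks = [f"<{tag}>\n{text.strip()}\n</{tag}>" for tag, text in examples]
    # The request goes last, after every reference example.
    content = "\n\n".join(blocks + [f"Request: {request}"])
    return [{"role": "user", "content": content}]


# Example usage: one entry per format listed above (placeholder texts).
messages = prime_with_examples(
    [
        ("tone_sample", "A finished post whose voice you want to match."),
        ("competitor_post", "The post you want reframed in your own voice."),
        ("output_template", "Headline:\nHook:\nThree bullet points:"),
    ],
    "Reframe the competitor post in the tone of the sample, filling in the template.",
)
```

Placing every example ahead of the request is the "priming the context" idea expressed in code: the model reads the target tone and template before it reads the ask.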

Career reality check: roles, pay, and longevity

Reddit's career threads on prompt engineering are among the most heated in these communities. Job postings for "prompt engineers" peaked in 2024 and have since consolidated, with many companies absorbing the function into existing roles rather than hiring dedicated specialists. That does not mean the skill is worthless; it means the market has recalibrated around who actually does the work.

What the job market actually looks like

Most practitioners in Reddit's prompt engineering career threads report that standalone "prompt engineer" titles are rare outside AI labs and large enterprise teams. Instead, the skill shows up as a premium add-on within marketing, operations, and software roles. If you can demonstrate that your prompting discipline cuts workflow time or improves output quality at scale, that is a tangible differentiator employers notice.

The practitioners earning the most are not selling "prompt engineering" as a title; they are selling measurable results that happen to come from strong prompting habits.

Is this a long-term career path?

Reddit's honest consensus is that treating prompt engineering as a standalone career bet carries real risk. Models will keep getting better at interpreting loose instructions, which narrows the gap between average and skilled prompting over time. Your safer play is to embed prompt skills into a broader specialty, whether that is content strategy, marketing automation, or AI workflow design, rather than staking your career identity on the label alone.

How to learn faster using Reddit without wasting time

Reddit rewards people who know where to look. Subreddits like r/PromptEngineering and r/LocalLLaMA concentrate the most practical signal, but even there you need a filter. Sorting by "Top" within the past month cuts through noise faster than browsing "New," and searching for specific use cases rather than general questions returns threads with actual working examples attached.

Follow practitioners, not theorists

The accounts worth your attention share results with evidence: screenshots, before-and-after outputs, or workflows they actually run in production. Bookmark users who post that way and check their comment history. Their older contributions often contain more depth than any single post, and you will find techniques that never made it into popular threads.

Treat upvoted comments as your curriculum

Top-voted comments in Reddit's prompt engineering threads give you a fast filter for community-validated advice. Read the full reply chain under each one rather than stopping at the original post. Disagreements in the replies often surface the most nuanced corrections, and those corrections tell you exactly where a technique breaks down in practice. That context separates someone who skimmed Reddit from someone who actually learned from it.

The real learning is in the pushback, not the original post.

Where to go next

Reddit prompt engineering communities give you a real-time pulse on what practitioners actually use, argue about, and throw out. The through-line across everything covered here is that the skill is moving from clever phrasing toward structured context management, and the people getting the most consistent results are the ones building repeatable systems rather than crafting one-off prompts from scratch every time.

Your next move is to stop treating prompts as individual messages and start treating them as reusable building blocks inside a larger workflow. That shift changes how fast you can produce quality output and how easy it becomes to replicate what works. If you want a workspace designed specifically for that, try AI Flow Chat's visual prompt workflow builder to connect your sources, prompts, and outputs on one canvas. Consistent results come from consistent systems, and that is exactly what the best Reddit practitioners keep proving out.
